At the end of last year, the Laboratory for AI Security Research (LASR) – a public-private partnership between government, enterprise and academia that’s dedicated to mitigating security risks to and from AI to drive national resilience and foster growth – issued a specific call for UK SMEs addressing AI supply chain security.

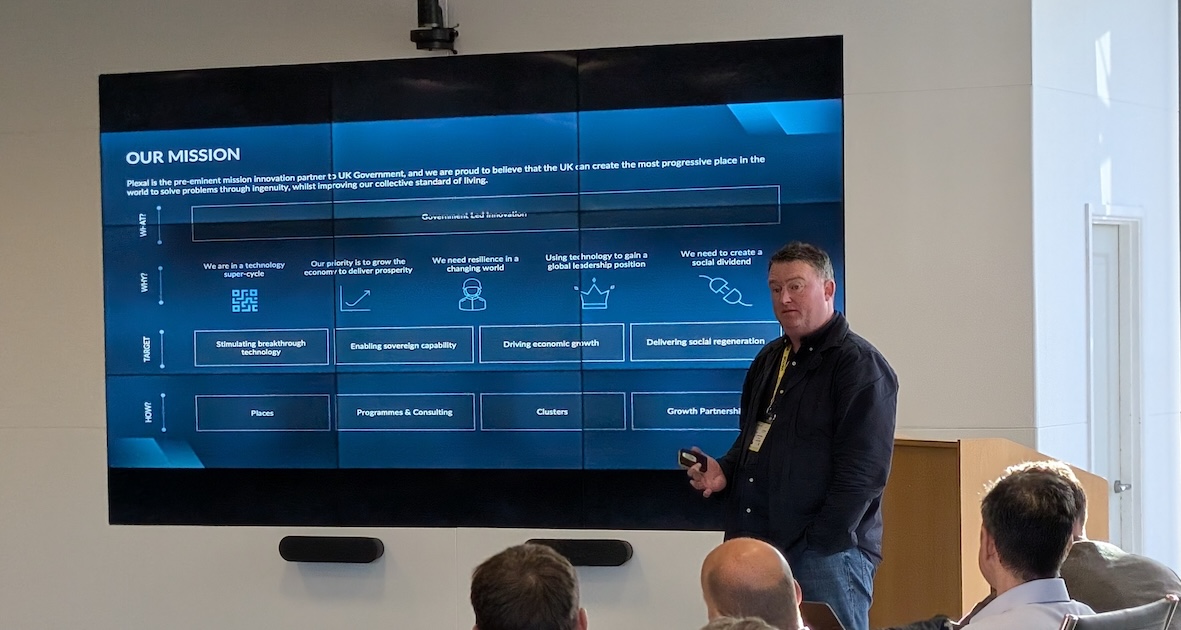

Delivered by Plexal and sponsored by Cisco and HM Government, our outcome‑focused initiative is moving forward as industry, LASR researchers and innovators collaborate to solve near‑term supply chain challenges critical for safe deployment of AI in high‑stakes environments.

Our work is focused on:

1. Secure deployment and monitoring of AI at the edge for critical national infrastructure (CNI)

2. The origin and trustworthiness of AI components

3. The integrity of AI models throughout their lifecycle

Why AI supply chain security matters

Software supply chains now face a new class of risks: pre‑trained AI models that may conceal hidden backdoors, trojans that remain dormant until triggered and AI tools capable of quietly exfiltrating sensitive data. With today’s complex web of dependencies, a single compromised component can put entire systems at risk.

Building confidence in AI therefore requires a foundation of trust, one anchored in robust AI security that safeguards models, infrastructure and data against cyber threats and malicious interference.

The Annual Review from the UK’s National Cyber Security Centre (NCSC) highlights that supply chain compromise remains one of the most significant cyber security risks, as adversaries increasingly target software components and the interconnected dependencies supporting CNI.

As AI capabilities become further embedded across essential services, these risks now extend directly to the models, datasets and infrastructure that AI systems rely on. Strengthening the provenance, integrity and continuous monitoring of AI throughout its lifecycle is becoming central to national resilience.

With this in mind, we’ve selected a cohort of companies that can provide practical, near‑term solutions to these challenges, from securing models deployed at the edge and validating the trustworthiness of AI components to detecting drift and emerging vulnerabilities over time. Together, they help lay the groundwork for safer, more resilient AI systems across the entire supply chain. They are:

Mind Foundry

Mind Foundry is a UK sovereign AI company spun out of the University of Oxford, providing responsible solutions for defence and national security. Mind Foundry’s products and technology services enhance operations with connected intelligence by turning complex sensor data into trusted insights, accelerating decision-making and putting AI in human hands where it matters most.

Secure Agentics

Secure Agentics builds the cyber security products required for a future shaped by AI agents. Its core product is a monitoring and control platform for agentic AI that detects when agents become misaligned or compromised, or behave in unintended ways, and intervenes to prevent harm. This enables the deployment and scaling of AI agents with confidence, maximising automation benefits while maintaining security, governance and oversight.

Tikos Technologies

Tikos Technologies is a UK deeptech startup developing an AI assurance platform for defence, CNI and financial services. Tikos directly supports supply chain security by analysing model internals, tracing neural activation paths and detecting vulnerabilities, hallucinations and potential misuse in models, including LLMs.

Patricia Jamelska, Innovation Lead for LASR Programmes at Plexal, says: “We’re pleased to welcome Mind Foundry, Secure Agentics and Tikos Technologies into this cohort. As AI systems become increasingly embedded across critical services, securing the AI supply chain is no longer a future concern; it’s a present‑day priority.

“While many organisations are advancing AI capabilities, far fewer are addressing the risks that come with them. The practical innovation these SMEs bring is essential to strengthening the UK’s resilience and working closely with them helps us build the evidence base needed to ensure AI is secure and trustworthy.”

About LASR

The LASR partnership comprises world-leading experts from UK organisations including Plexal, University of Oxford, The Alan Turing Institute, Queen’s University Belfast and the UK Government, working with a range of universities and commercial partners as appropriate. By integrating the best minds from academia, industry and government, we conduct cutting-edge research, develop innovative solutions, commercialise outputs and strengthen international cooperation on AI security.